In the past few years, generative artificial intelligence (AI) has swept across this nation’s college campuses like a plague, leaving nary a classroom or dorm room free from its influence. In fact, just sit in the back of a classroom and observe what happens for a few minutes. You’ll probably see students open up ChatGPT to complete an assignment, passing it off as their own work.

Depending on who you ask, the high rate of AI usage on college campuses — a 2025 study found that as many as 71% of students have used generative AI — is either the end of academia or the dawn of a new, brighter educational era. Generative AI usage has had its fair share of both merits and drawbacks. While studies have shown that programs like ChatGPT can “enhance traditional teaching methods” by providing students with immediate feedback on writing tasks or by drafting succinct and accessible summaries of complex texts and ideas, they also reveal that these same programs decrease critical thinking skills, pass off inaccurate information as true and pose major ethical problems when it comes to plagiarism, authorship and racialized, heteronormative information bias.

This editorial, however, is not the place to hash out this inevitably complex debate. What this editorial will do is address the fact that a significant volume of this AI usage directly contradicts educators’ wishes, with many professors prohibiting students from using the programs. For instance, Fordham University’s website includes a page that not only provides educators with tools to catch generative AI usage but also provides a list of “Fordham-supported AI Tools for Learning and Productivity” that notably does not include programs like ChatGPT. Anti-AI usage messaging can likewise be found littered across Fordham professors’ syllabi, with one syllabus from an upper-level art history course reading, “It is a basic and obvious expectation that you will not use AI (ChatGPT, etc.) for any work related to this course. The use of AI will result in an automatic failing grade for the assignment and may be forwarded to the University’s Academic Integrity Committee.”

Considering that students are using generative AI even when faculty and staff directly tell them not to, a major shift in pedagogy, i.e., the ways in which professors approach the actual act of teaching, seems to be in store. More specifically, it is the opinion of the editorial board of The Fordham Ram that every university, Fordham included, should fundamentally readjust its approach to AI usage in educational settings, embracing it as an educational tool in a context that forces students to bring in and think critically about their own scholarly knowledge.

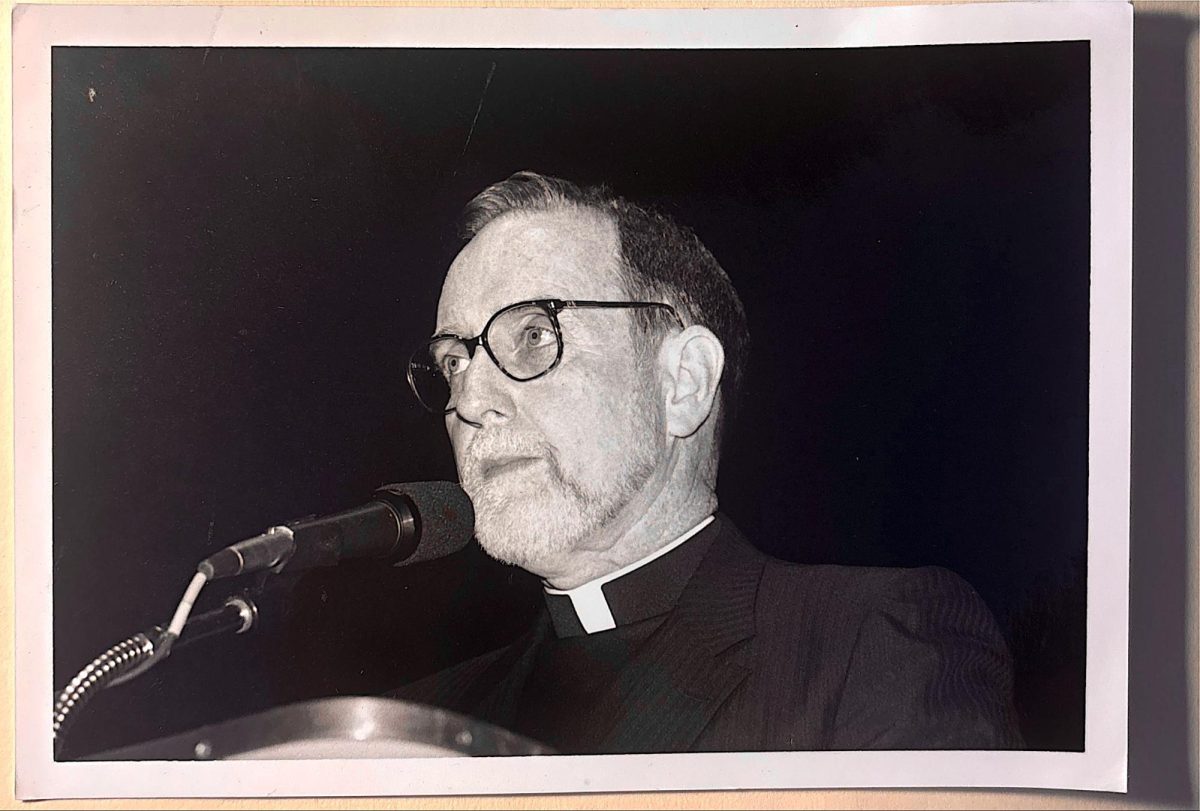

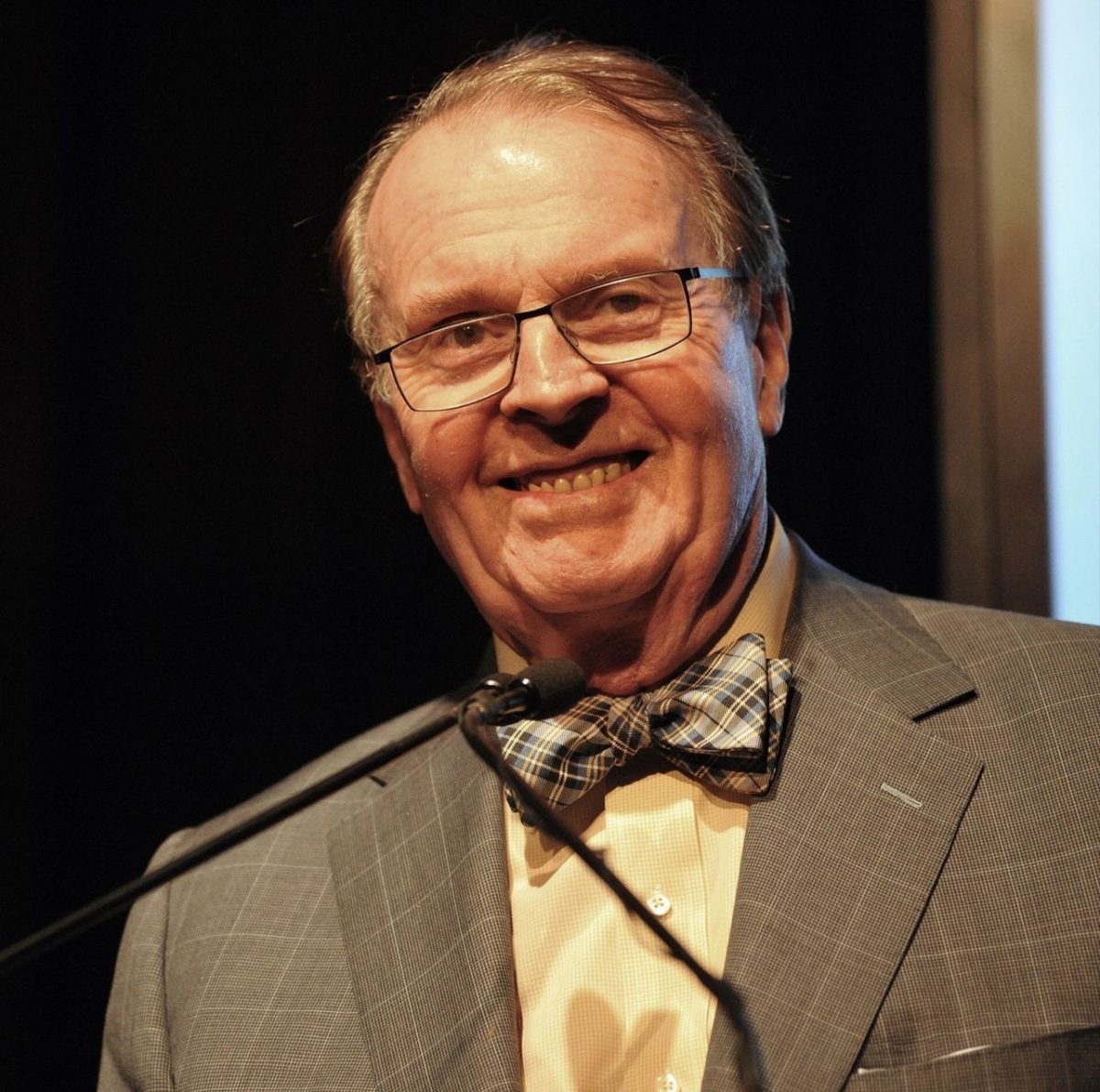

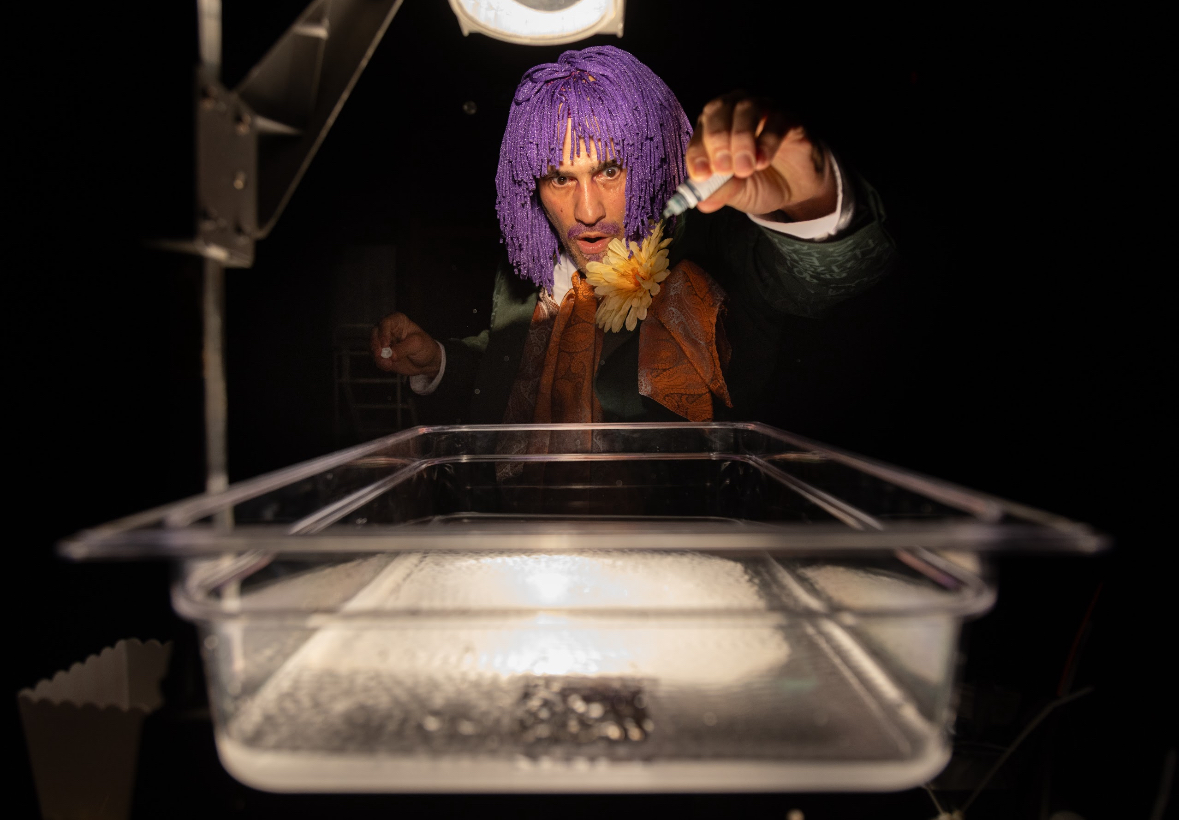

Fordham may very well already have a blueprint for adopting such an approach to the lurking presence of generative AI: Michael Peppard, Ph.D., is a longtime professor of theology here at Rose Hill. This past academic year, Peppard introduced a fascinating approach to generative AI usage in his courses by directly asking his students to use generative AI when completing certain assignments. However, this does not mean that students should simply drop an essay prompt into a dialogue box and then submit whatever they receive from the AI software. Instead, Peppard asks students to engage in a critical back-and-forth dialogue with the generative AI program by crafting a series of highly intentional and discerning prompts that test the program’s scholarly knowledge surrounding a specific topic. After this “conversation” with the program, Peppard then requires that students write a reflection on their respective experiences, critiquing the accuracy and insightfulness of the responses that the generative AI program provided.

Peppard succinctly described this process of “require and critique” in an op-ed for Bloomberg, saying that “[f]or some assignments, students will use generative AI and then, as their evaluated work, offer higher-order criticisms of its outputs based on other sources and inputs from our course.”

What this process does is productively acknowledge that students are going to use generative AI. In other words, if students are going to use these generative AI programs regardless of whether or not they are forbidden from doing so, then professors might as well ensure their usage demands some critical thinking on the student’s part. Asking students not only to create probing prompts that test the programs’ knowledge but also to critique the adequacy of the received responses is a great way to have them critically engage with AI. It requires that the students bring their own learned knowledge to the table; after all, any critical engagement with a database’s knowledge must necessarily stem from knowledge beyond the scope of what the database can provide.

To be sure, this is not the only way in which professors may choose to utilize generative AI in their pedagogy. They may choose to apply these programs to their curriculum in wholly different ways (e.g., encouraging their use for summarization purposes), and they also, of course, reserve the right to attempt to find a novel way to mitigate their usage. After all, generative AI does indeed carry with it concerns about academic integrity and its potential to devitalize the minds and motivations of students.

Beyond these questions about the ethics and efficacy of AI usage in academic circles, we at The Ram believe that any and all discourse about generative AI is woefully incomplete without acknowledging that any extended usage of these programs does significant damage to the environment. Programs like ChatGPT or Adobe Firefly pose an existential threat to our well-being, as their most basic operations consume ridiculous amounts of electricity and water while simultaneously producing concerning levels of carbon dioxide emissions. Research even shows that “[w]ithin years, large AI systems are likely to need as much energy as entire nations.” Thus, regardless of whether shifts in pedagogy are implemented, and regardless of whether they are effective or not, the fact of the matter is that all this generative AI usage is coming at the cost of our home: the natural world.